How to Split Big Prompts into Smaller Chunks for AI Processing

Introduction

AI models like GPT can accept relatively long prompts. However, very long prompts can exceed token limits and may produce less accurate or less coherent responses. Breaking them into smaller, more meaningful prompts helps improve performance, coherence, and efficiency. This guide explores ways to split large prompts cleanly, both by hand and with a tool called GPT Prompt Splitter.

Try our tool

Split your prompts easily with our free online tool

Reasons for Splitting Big Prompts

1. Token Limitations in AI Models

Every AI model has a maximum number of tokens it can process at once (e.g., the max token count in GPT-4). If a prompt exceeds this limit, it may be rejected outright or truncated, which leads to incomplete or wrong responses.
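A quick way to see whether a prompt is likely to exceed a limit is to estimate its token count before sending it. The sketch below is only an approximation: it assumes roughly 4 characters per token for English text, and the names `estimate_tokens`, `fits_budget`, and `reserved_for_reply` are illustrative, not part of any model's API. For exact counts, use the tokenizer that belongs to your model.

```python
import math

# Assumption: roughly 4 characters per token for English text.
# Real tokenizers vary by model, so treat the result as an estimate only.
CHARS_PER_TOKEN = 4


def estimate_tokens(text: str) -> int:
    """Return a rough token estimate for the given text."""
    return math.ceil(len(text) / CHARS_PER_TOKEN)


def fits_budget(prompt: str, token_limit: int, reserved_for_reply: int = 0) -> bool:
    """Check whether the prompt plus the space reserved for the reply fits the limit."""
    return estimate_tokens(prompt) + reserved_for_reply <= token_limit


# Example: a long prompt checked against a hypothetical 500-token limit.
prompt = "Summarize the following report. " * 100
print(estimate_tokens(prompt))
print(fits_budget(prompt, token_limit=500, reserved_for_reply=200))
```

Reserving part of the budget for the reply matters because the limit usually covers the prompt and the response together.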

2. Context and Coherence Maintenance

Long prompts tend to confuse the model and lower the quality of its responses. Smaller chunks help maintain clarity and relevance.

3. Performance Optimization

Processing large prompts consumes more resources. Splitting them into chunks can make AI interaction faster and more efficient.

Best Methods for Splitting Big Prompts

1. Identify Natural Breakpoints

Look for logical divisions such as:

- Paragraphs

- Sentence boundaries

- Section headers
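As a minimal sketch of the first two breakpoints, the helpers below split text on blank lines (paragraphs) and then on sentence-ending punctuation. The function names are illustrative, and the sentence rule is deliberately naive: it will mis-split abbreviations such as "e.g." and does not detect section headers.

```python
import re


def split_paragraphs(text: str) -> list[str]:
    """Split on one or more blank lines and drop empty pieces."""
    return [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]


def split_sentences(paragraph: str) -> list[str]:
    """Naive sentence split: break after '.', '!' or '?' followed by whitespace."""
    return [s.strip() for s in re.split(r"(?<=[.!?])\s+", paragraph) if s.strip()]


text = "First point. It has two sentences!\n\nSecond point?"
for paragraph in split_paragraphs(text):
    print(split_sentences(paragraph))
```

Splitting by paragraph first and by sentence second keeps related sentences together for as long as possible.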

2. Conduct Thematic Segmentation

Group similar ideas into separate chunks. For example, instead of writing one long product description, break it into separate chunks for features, pricing, and benefits.

3. Utilize Prompt Structuring Techniques

Techniques that often work well include:

- Question-based chunking: Break a broad query into several concrete sub-questions.

- Step-by-step processing: Give instructions one step at a time instead of in one long command.
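Question-based chunking can be sketched as a small helper that pairs a shared context with one sub-question per prompt. The function name `build_sub_prompts` and the prompt layout are assumptions chosen for illustration; adapt the wording to whatever your model responds to best.

```python
def build_sub_prompts(context: str, questions: list[str]) -> list[str]:
    """Return one prompt per question, each carrying the shared context."""
    total = len(questions)
    return [
        f"Context:\n{context}\n\nQuestion {i} of {total}: {q}"
        for i, q in enumerate(questions, start=1)
    ]


prompts = build_sub_prompts(
    "Our app syncs notes across devices.",
    ["Who is the target user?", "What are the main risks?"],
)
for p in prompts:
    print(p)
    print("---")
```

The same pattern covers step-by-step processing: replace the questions with individual instructions and send them in order.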

Automating Splitting with GPT Prompt Splitter

1. What is GPT Prompt Splitter?

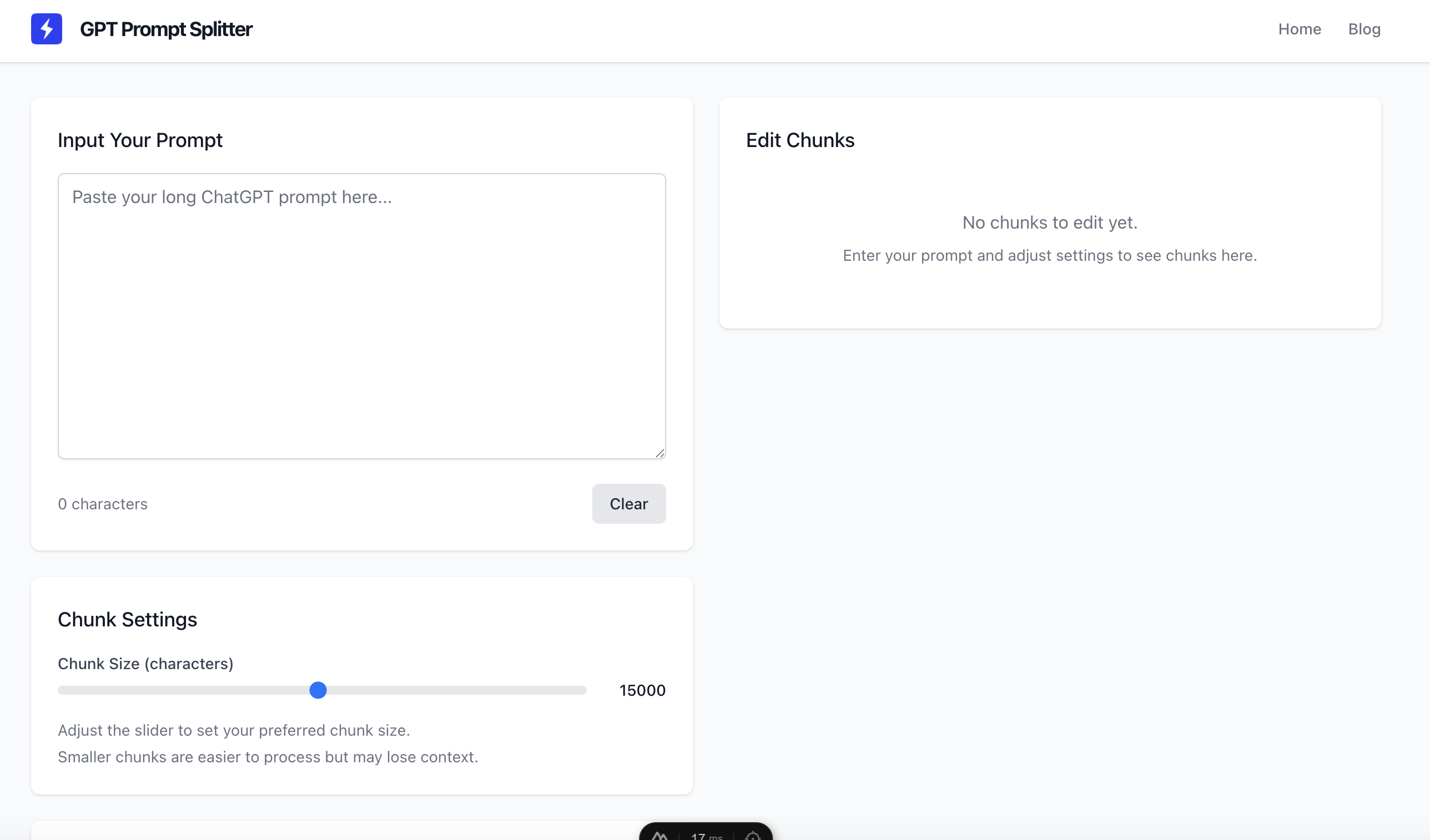

GPT Prompt Splitter is a tool for segmenting large prompts. Its main purpose is to divide a prompt into chunks that an AI model can process more reliably.

2. How GPT Prompt Splitter Works

The tool analyzes the input text for logical breakpoints and splits it into smaller chunks according to context, sentence structure, and length. It also makes sure the chunks keep their logical flow so that meaning and coherence are preserved.
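The tool's internals are not described here, so the following is not its implementation. It is a generic sketch of one common approach to length-aware splitting: greedily packing whole sentences into chunks that stay under a token budget. The 4-characters-per-token estimate and the names `estimate_tokens` and `pack_chunks` are assumptions made for illustration.

```python
import math
import re


def estimate_tokens(text: str) -> int:
    # Assumption: about 4 characters per token; real tokenizers differ.
    return math.ceil(len(text) / 4)


def pack_chunks(text: str, max_tokens: int) -> list[str]:
    """Greedily pack whole sentences into chunks of about max_tokens each.

    A single sentence larger than max_tokens is kept whole as its own chunk.
    """
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks: list[str] = []
    current = ""
    for sentence in sentences:
        candidate = f"{current} {sentence}" if current else sentence
        if current and estimate_tokens(candidate) > max_tokens:
            # The next sentence would overflow the budget: close this chunk.
            chunks.append(current)
            current = sentence
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks


for chunk in pack_chunks("One two three. Four five six. Seven eight nine.", max_tokens=8):
    print(chunk)
```

Because sentences are never cut in half, each chunk stays readable on its own, at the cost of chunks that vary in size.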

3. Key Features

- Automatic token calculation

- Context-aware segmentation

- Customizable chunk sizes

- Supports various AI models

Best Practices for Optimizing Your Prompt Splitting

1. Give Every Prompt a Clear Purpose

Each chunk should have a specific purpose, whether that is to answer a question, summarize content, or give directions.

2. Maintain Consistent Formatting

Use bullet points, numbered lists, and subheadings to keep prompts structured and easy to follow.

3. Test and Refine Splits

Experiment with several splitting techniques and measure how well each one works for your particular AI application.
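One simple starting point for comparing strategies is to look at the size statistics of the chunks each one produces. The helper below is a hypothetical sketch; `summarize_chunks` is an illustrative name, and chunk sizes are only a proxy, so response quality still has to be judged on real outputs.

```python
from statistics import mean


def summarize_chunks(chunks: list[str]) -> dict[str, float]:
    """Report simple size statistics (in characters) for a list of chunks."""
    if not chunks:
        return {"count": 0, "min": 0, "max": 0, "mean": 0.0}
    sizes = [len(c) for c in chunks]
    return {
        "count": len(sizes),
        "min": min(sizes),
        "max": max(sizes),
        "mean": mean(sizes),
    }


# Compare two hypothetical splits of the same text.
by_paragraph = ["Intro paragraph.", "A much longer paragraph with details."]
by_sentence = ["Intro paragraph.", "A much longer paragraph", "with details."]
print(summarize_chunks(by_paragraph))
print(summarize_chunks(by_sentence))
```

A large gap between the minimum and maximum size often signals that a split is uneven and worth revisiting.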

Conclusion

Splitting large prompts into smaller chunks can improve the speed, efficiency, response accuracy, and coherence of AI applications, whether the split is done manually or with an automation tool such as GPT Prompt Splitter. Optimizing an AI workflow should therefore begin with structured prompt segmentation!
